My GPU's fans recently started to malfunction, which means it smells like hot failure in my office while I'm waiting on a new GPU to arrive.

In the meantime, if it's not under too much stress, the room is fairly cool, and the case is open, things work fine. When any of those conditions doesn't hold, it overheats and abruptly stops working while the computer is still running, which is highly concerning.

I have an NVIDIA GPU, which isn't supported by the standard Ubuntu MATE sensors applet package. More on this in the exciting conclusion. The nvidia-settings GUI application seems to report the correct temperature, and through some Googling I discovered the magic incantation:

```shell
nvidia-smi --query-gpu=temperature.gpu --format=csv,noheader
```

This prints the temperature of my GPU in degrees Celsius as a plain integer. Excellent. Neither the sensors app nor the system monitor, which shows me graphs of CPU, memory, and I/O utilization, seemed to offer a simple way to add my "sensor" as a new graph, so I had my goal.
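Wrapping that command from Python, the language the applet ended up in, takes only a few lines with `subprocess`. A minimal sketch, assuming a single GPU: `parse_temperature` and `read_gpu_temperature` are hypothetical helper names, and the sketch simply takes the first line because `nvidia-smi` prints one line per GPU.

```python
import subprocess

# The query from above: temperature only, as CSV without a header row.
NVIDIA_SMI_CMD = [
    "nvidia-smi",
    "--query-gpu=temperature.gpu",
    "--format=csv,noheader",
]


def parse_temperature(output: str) -> int:
    """Parse nvidia-smi output into an integer temperature in degrees Celsius.

    nvidia-smi prints one line per GPU; this sketch takes the first
    non-empty line and ignores the rest.
    """
    for line in output.splitlines():
        line = line.strip()
        if line:
            return int(line)
    raise ValueError("nvidia-smi produced no temperature output")


def read_gpu_temperature(timeout: float = 5.0) -> int:
    """Run nvidia-smi and return the first GPU's temperature."""
    result = subprocess.run(
        NVIDIA_SMI_CMD,
        capture_output=True,
        text=True,
        check=True,       # raise CalledProcessError on a non-zero exit
        timeout=timeout,  # don't hang forever if the driver is wedged
    )
    return parse_temperature(result.stdout)
```

Keeping the parsing separate from the shell-out means the parsing can be exercised without a GPU; on a machine with the NVIDIA driver installed, `read_gpu_temperature()` should return the same integer the command prints.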

How hard could it be?

MATE University

Some rough googling later, I found a git repo called MATE University under the worryingly named "mate-desktop-legacy-archive" organization.

Although this repo has a Python example, it also contains a C example and descends from the GNOME folks, so it uses automake/autoconf and is close to illegible.

I have barely touched the -dev packages on my dev box since transitioning to writing mostly in Go for work and leisure, so I had to install the following packages to get autogen.sh working:

- mate-common
- libglib2.0-dev
- libmate-panel-applet-dev

Seems standard enough.

However, after realizing that the Python applet involved just 3 files, and that the only use of thousands of lines of autotools was to fill in the "Location" in the service description, I decided to go looking for a fat-free example.
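For a sense of scale, the substitution autotools was performing boils down to a string replace. A minimal sketch, assuming the template files mark the installed path with an `@LOCATION@` placeholder; the placeholder name and both helper functions are hypothetical, not taken from MATE University.

```python
from pathlib import Path

# Hypothetical placeholder: assumed to match what the .in templates use.
PLACEHOLDER = "@LOCATION@"


def render_template(template: str, location: str) -> str:
    """Replace every placeholder with the installed script path."""
    if PLACEHOLDER not in template:
        raise ValueError(f"template contains no {PLACEHOLDER} placeholder")
    return template.replace(PLACEHOLDER, location)


def render_file(src: Path, dest: Path, location: str) -> None:
    """Render a template file (e.g. the service description) to dest."""
    dest.write_text(render_template(src.read_text(), location))
```

Raising when the placeholder is missing is a deliberate choice: a silently unchanged file is exactly the kind of crossed wire that is hard to spot later.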

I eventually found another repo from user furas and a recent forum post, both of which were far more straightforward about what was necessary and what was merely convention.

The Applet

I created a basic repo for the nvidia-gpu-temp applet I wrote. It shells out to get the temperature, picks a colour to display it in, and then updates the label in the applet.
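The colour step can be as simple as a threshold table checked from hottest to coolest. A minimal sketch; the thresholds and colour names below are hypothetical placeholders, not the values the applet uses.

```python
# Hypothetical thresholds in degrees Celsius, hottest first; tune for your card.
THRESHOLDS = [
    (85, "red"),
    (70, "orange"),
    (0, "green"),
]


def colour_for(temp_c: int) -> str:
    """Return a colour name for a temperature using the first matching threshold."""
    for limit, colour in THRESHOLDS:
        if temp_c >= limit:
            return colour
    # Below every threshold, e.g. a nonsensical negative reading.
    return "grey"
```

Ordering the table from hottest to coolest means the first match wins, so adding a new band is a one-line change.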

Getting this all to work was very frustrating, because it isn't obvious how to find out which wires you've crossed while debugging. Thankfully, the panel applet machinery has abandoned the bonanza of XML-based configuration it fell in love with in the early noughties in favour of an INI-style format, but there isn't much documentation on what is convention and what is required. Does my applet need to have "Applet" in the name?

In the end, what tripped me up most was that exceptions were not being caught and logged as I had expected. My attempts at setting up a mainloop callback to update the temperature display using Glib.timeout_add failed silently for half an eternity on two counts: I hadn't imported Glib, and it's actually spelled GLib.
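One way to stop a timer callback from failing silently is to wrap it so that any exception is logged with its full traceback. A minimal sketch; the `log_exceptions` decorator and the logger name are hypothetical, and returning `True` after an error (so GLib keeps calling the callback) is a design choice, not something GLib requires.

```python
import functools
import logging

log = logging.getLogger("nvidia-gpu-temp")  # hypothetical logger name


def log_exceptions(callback):
    """Wrap a GLib timeout callback so exceptions are logged, not lost.

    GLib keeps calling a timeout callback while it returns True and removes
    it once it returns False. If the wrapped callback raises, this logs the
    traceback and returns True so one bad reading doesn't stop future updates.
    """
    @functools.wraps(callback)
    def wrapper(*args, **kwargs):
        try:
            return callback(*args, **kwargs)
        except Exception:
            log.exception("error in %s", callback.__name__)
            return True
    return wrapper
```

In the applet you might then send logs somewhere visible, e.g. `logging.basicConfig(filename="/tmp/nvidia-gpu-temp.log", level=logging.DEBUG)`, and register the wrapped callback with `GLib.timeout_add_seconds(5, update_label)` after `from gi.repository import GLib` (capital L). The log path and `update_label` are hypothetical.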

Once this frustration was out of the way, things went pretty well. It's nice that Gtk is styled with CSS these days, and that Gtk.Label has set_markup, which lets you use Pango's HTML-like markup for styling.
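The string handed to `Gtk.Label.set_markup` is Pango markup, which is XML, so interpolated text should be escaped. A minimal sketch; `coloured_markup` is a hypothetical helper, and it uses the standard library's XML escaping (GLib has its own markup-escaping function) so it runs without PyGObject.

```python
from xml.sax.saxutils import escape, quoteattr


def coloured_markup(text: str, colour: str) -> str:
    """Build Pango markup showing `text` in bold in the given colour.

    escape() handles &, < and > in the text; quoteattr() quotes and
    escapes the attribute value.
    """
    return f"<span foreground={quoteattr(colour)} weight=\"bold\">{escape(text)}</span>"
```

In the applet this would be used as something like `label.set_markup(coloured_markup(f"{temp}°C", colour))`, where `label`, `temp`, and `colour` are hypothetical names.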

The Penny Drops: mate-sensors-applet-nvidia

Twenty years ago, when I was using Linux but was not yet comfortable with the ecosystem, I'd find something missing and write it myself. It would inevitably be a half-baked version of something that already existed.

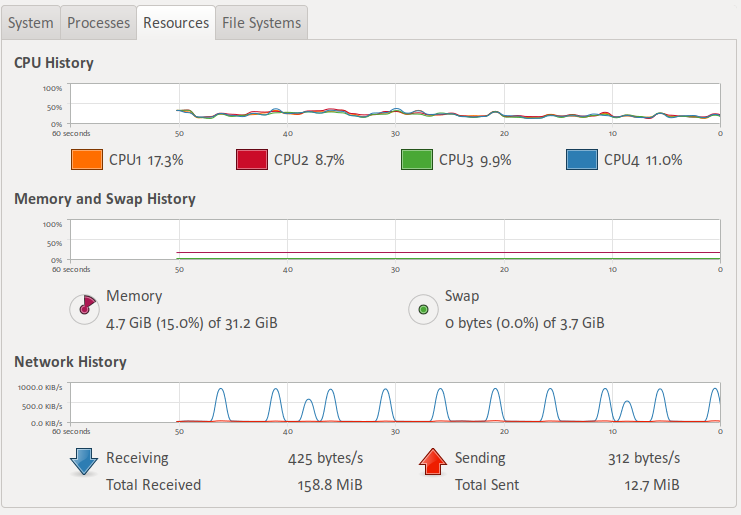

Of course, after writing the applet, I thought about a more general purpose type of applet that would be useful. The MATE "system monitor" applet has some really nice widgets:

And the graphs on the panel are attractive, too.

What if there were an applet that could graph values over time like this, smoothly, where those values could come from a custom command?
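The data side of that idea is small. A minimal sketch of a hypothetical `SampleHistory` class that keeps a fixed window of readings from an arbitrary command and smooths them with an exponential moving average; the smoothing method is my choice for illustration, not what the system monitor applet does, and drawing the graph is left out.

```python
import shlex
import subprocess
from collections import deque
from typing import Optional


class SampleHistory:
    """Fixed-size, smoothed history of readings from a custom command."""

    def __init__(self, size: int = 60, alpha: float = 0.3):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.samples = deque(maxlen=size)  # oldest samples drop off the left
        self.alpha = alpha                 # weight given to the newest reading
        self._smoothed: Optional[float] = None

    def add(self, value: float) -> float:
        """Record a raw reading and return the smoothed value that was stored."""
        if self._smoothed is None:
            self._smoothed = value
        else:
            self._smoothed = self.alpha * value + (1 - self.alpha) * self._smoothed
        self.samples.append(self._smoothed)
        return self._smoothed

    def poll(self, command: str) -> float:
        """Run a user-supplied command (no shell) and record its numeric stdout."""
        out = subprocess.run(
            shlex.split(command), capture_output=True, text=True, check=True
        ).stdout
        return self.add(float(out.strip()))
```

Splitting the command with `shlex` avoids going through a shell; a command that needs pipes would have to be written as `sh -c '...'`. A higher `alpha` tracks spikes faster, a lower one draws a smoother line.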

The system monitor applet is a standard applet living in a repo full of other applets, so I pulled up the source for mate-sensors-applet instead to see whether the graph widget was something standard and reusable.

Of course, this is when I learned that the sensors applet had NVIDIA support. It isn't compiled into the default Ubuntu package, but the package page pointed me to the mate-sensors-applet-nvidia package, and... it does exactly what I wanted all along.

To render all of this even more irrelevant, my replacement video card arrived in the mail roughly 45 minutes after I got everything working, so keeping a close eye on my GPU temperature was no longer a priority.

What did I learn from all of this?

The entire experience, plus writing this post, took only about 4-5 hours, because I have some prior experience with nearly all of this technology. It turned out to be time wasted, but I learned something and had fun, so it's not the worst outcome. It's very rewarding to be able to modify my GUI environment so easily to scratch a real itch and solve a real problem; this is one of the main reasons I continue to use Linux.

It's also quite frustrating to program in a frameworky system where you don't control your own entrypoint, and where the execution environment eats all of your errors.

Lastly, from a design perspective, this was a big usability failure on the part of my Linux distribution. Distributions have to cater to all sorts of users, from someone putting together a minimal system for esoteric, tiny devices to someone running a run-of-the-mill workstation like me. Any other consumer operating system would have pulled in these packages when I installed nvidia-settings and the binary GPU driver; not doing so leaves me with hardware that seems only partially supported.